Last April, 600 people gathered for a technology policy conference in downtown Washington, DC. The main speaker, former Google CEO Eric Schmidt, laid out what he called the “San Francisco consensus”: the view that “within three to five years, we’ll have what is called artificial general intelligence,” which will be able to extend its own capabilities without needing input from human beings. This development, according to the consensus, could bring considerable benefits, but also risks bringing about human extinction. The challenge of getting to one by avoiding the other, Schmidt explained, “is called the ‘eye of the needle’ problem. You need to get through this eye of the needle without killing yourself and killing everybody else, to get to this promised land of AI.”

In this statement, Schmidt summed up a common way of understanding AI progress. We are developing more and more powerful AI systems that pose extreme risks, but also promise great benefits. The AI community is colloquially divided between “doomers” and “boomers,” but the two groups don’t differ on the promised land so much as in their confidence in our ability to make it through the eye of the needle.

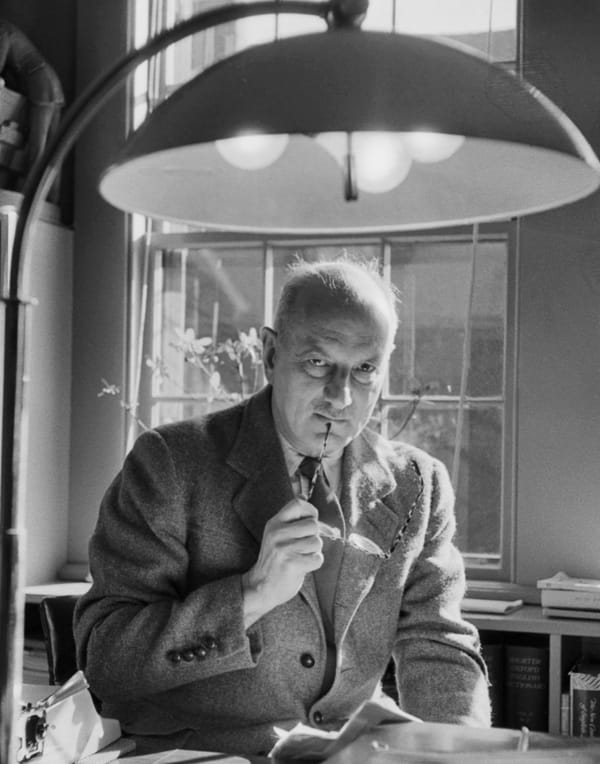

Regardless of where they fall on this spectrum, those who regard the AGI future as a potential “promised land” tend to argue that their predictions have an entirely rational basis. They are, they tell us, merely making logical extrapolations from observable trends. The work of Lewis Mumford, one of the twentieth century’s most caustic critics of technology, offers an alternative perspective: that such assumptions are rooted in a secular faith that lies behind the modern embrace of technological development. Mumford’s oeuvre covered a broad range, including urban design and literary criticism as well as the history and philosophy of technology. He laid out his case against uncritical belief in the benevolence of technology in a series of books and articles, notably Technics and Civilization (1934) and The Pentagon of Power (1970).

Mumford’s work suggests that technologists’ use of religious language—as with Schmidt’s “promised land”—reveals their faith in the “myth of the machine,” which he calls the “ultimate religion of our seemingly rational age.” At the core of the myth of the machine, he argued, is the idea that the development of technology is inherently connected to the improvement of the human condition. In other words, if we just keep upgrading our tech, human life will keep getting better. The connection between technological and social progress seemed self-evident during Mumford’s lifetime. Born in New York City in 1895, he lived through the invention of nitrogen fertilizer and the discovery of penicillin, which helped raise average life expectancy in the United States over this period from forty-one years to over seventy-seven years. He saw air travel, refrigerators, telephones, and television all become commonplace. In the face of such experiences, it seemed hard to sustain a case against technology improving the human condition. As Mumford sardonically asked, “Does any sensible person mourn the passing of the Stone Age?”

But without reverting to such nostalgia or primitivism, Mumford reappraised the history of technoscientific development, seeking out the roots of modern attitudes to technology in earlier times: in the drive to discovery that characterized the Age of Exploration; in the ordering of daily life that first appeared in the medieval monastery; and in the complex sociotechnical arrangements or “megamachines” that constructed the monuments of the Pyramid Age in Egypt.

This historical survey led Mumford to locate the most significant shifts not in the innovations of the Industrial Revolution, but rather in what he called the “cultural preparation” that took place in these earlier periods. Like Max Weber, who argued that capitalism spread through its “elective affinity” with a Calvinist faith that revered work and saving as signs of godliness, Mumford made the case that it was only after humans had already come to view the world instrumentally that we could create a system of technological production geared towards its own acceleration. This process of “cultural preparation,” he argued, set the stage for industrial transformation and turned the “myth of the machine” into the default way of conceiving of social change.

The branch of Christian theology known as theodicy attempts to account for the existence of evil in the world without making God culpable for it. The myth of the machine offers a secular equivalent. It allows us to view beneficial outcomes as inherent to technological development itself, while understanding harms as incidental or as unintended byproducts. These downsides are often seen as the result of human failures, whether through misuse by bad actors or through careless deployment. By sustaining this separation, Mumford argued, the myth of the machine prevents a true accounting of the changes that science and technology have wrought. As he lamented, most people “still see only the endless bounties and benefits… and have closed their eyes to the varieties of dehumanization and extermination” they enable and promote.

Friedrich Nietzsche argued that Christianity had inverted an aristocratic moral order in which strength was good and weakness was bad, replacing it with a “slave morality” that privileged the weak and stigmatized the strong. He called this a “transvaluation”: the process by which one value system comes to eclipse another. According to Mumford, a similar transvaluation took place in the modern era when we ceased to value technology in the service of human goals and values and came instead to regard the efficiency and productivity obtained through technical innovation as an end in itself, to which humans should subordinate themselves.

The consequence of this transvaluation is that questions of human flourishing are, in practice, largely irrelevant to the embrace of technology. Consider traditional measures of human flourishing in today’s technologically advanced societies. The members of these societies are less likely to get married, are having fewer children, have fewer close friends, have weaker social ties, take part in fewer communal activities, and worship less. This decline has happened amidst an explosion in technologies that are marketed as pro-social, offering means to connect people across distances, while also promising to eliminate the mundane tasks that interfere with our flourishing.

The point here is not that there is a clear causal link between social media or e-commerce platforms and these trends. It is rather that when we adopted these technologies, novelty and market success took priority over any consideration of whether they would promote older ideas of the good life. As Mumford observed, “society submits meekly to every new technological demand and utilizes without question every new product whether it is an improvement or not, since under the present dispensation the fact that the proffered product is the result of a new scientific discovery or a new technological process is the sole proof required of its value.”

Mumford worried that this process of transvaluation was making humans more machine-like. Machine values overtake what he calls “life values,” with the dollar becoming the measure of all things. We come to genuinely desire and covet that which makes us more “productive.” That something saves time is seen as a good in itself, regardless of its effect on other measures of human flourishing.

The myth of the machine suffuses contemporary discussions of artificial intelligence. Increasingly, we hear this faith expressed in overtly religious language. Ideas such as superintelligence or the singularity are often presented as an apocalyptic endpoint of technological development, the stage at which all human problems can be resolved by benevolent robots. If we reach this point, current concerns about human flourishing fade into insignificance in view of the bounty we will reap.

The myth’s secular theodicy appears in present discussions of real or potential harms from AI. Negative effects of its use in schools and universities, such as cognitive offloading, tend to be attributed to a human failure to adapt, or are taken as a sign that our educational institutions are hopelessly obsolete and need to be brought up to date. Similarly, workers who fear being replaced by AI are told they are inadequately prepared for the evolution of the workplace. Hence, policymakers propose legislation to help “workers effectively use AI tools to boost productivity and remain competitive” and ensure workers are “adequately skilled, confident and ready to grasp the full opportunities of AI.” The benefits are there, if we can only overcome human incompetence.

AI models have one undeniable virtue: the increase in speed and efficiency with which they can carry out tasks that were once the province of human beings. Language models can produce functional text for a wide range of contexts, while image generation models are giving us the capability to render into existence whatever image or video takes our fancy. This is widely taken as clear evidence of the benefits of AI. For Mumford, this type of thinking is precisely the problem. The myth of the machine is dehumanizing because it subordinates human values to machine values: speed and efficiency.

The most striking evidence of the myth’s cultural pervasiveness is that many avid accelerationists do not deny that AI could mean the end of humanity. They merely differ from the doomers in believing that this risk is necessary—even desirable—to achieve the spectacular increases in efficiency and productivity promised by AGI. Mumford foresaw this extreme endpoint. “The myth of the machine,” he wrote, “the basic religion of our present culture, has so captured the modern mind that no human sacrifice seems too great provided it is offered up to the insolent Marduks and Molochs of science and technology.”

Those branded as skeptics or doomers likewise accept the premises of the myth of the machine. The stated aim of many organizations concerned with avoiding the worst AI outcomes is that we should “realize the benefits while mitigating the risks” of the technology. Mumford would argue the first half of this statement concedes too much, accepting the basic premise of the myth of the machine while presenting the task as removing the obstacles to realizing its benefits. Many skeptics also share a basic misanthropic premise of machine superiority, focusing as they do on the biased, irrational, and flawed nature of human beings that needs machinic augmentation.

Technological doom is not something that lies ahead of us. It has already arrived. The demise of humanity is an ongoing process by which we allow ourselves to be colonized by machine values, a “gradual disempowerment” that is happening all around us. AI should be seen not as a race to a promised land but as a journey ever farther away from human flourishing.

Those concerned about AI risk should look beyond questions of policy. Background systems of belief, such as the myth of the machine, condition what we take for granted in our relationship to technology and delimit the possible in policy. Changing these systems opens up new imaginative horizons for action. “For those of us who have thrown off the myth of the machine,” Mumford wrote, “the next move is ours: for the gates of the technocratic prison will open automatically, despite their rusty ancient hinges, as soon as we choose to walk out.”